Recent developments in evidence-based decision making in the nonprofit/social sector. Practical ways to discover and exchange evidence-based insights. References, resources, and links to organizations with innovative programs.

Data-Driven is No Longer Optional

Whether you’re the funder or the funded, data-driven management is now mandatory. Evaluations and decisions must incorporate rigorous methods, and evidence review is becoming standardized. Many current concepts are modeled after evidence-based medicine, where research findings are sorted into categories according to their quality and generalizability.

SIF: Simple or Bewildering? The Social Innovation Fund (US) recognizes three levels of evidence: preliminary, moderate, and strong. Efforts are under way to standardize evaluation, yet SIF recognizes 72 evaluation designs (!).

What is an evidence-based decision? There’s a long answer and a short answer. The short answer: it’s a decision that reflects the current best evidence. That means drawing findings from both internal and external sources, using high-quality methods of data collection and analysis, and maintaining a feedback loop that brings in new evidence.

On one end of the spectrum, evidence-based decisions bring needed rigor to processes and programs with questionable outcomes. At the other end, we risk creating a cookie-cutter, rubber-stamp approach that sustains bureaucracy and sacrifices innovation.

What’s a ‘good’ decision? A ‘good’ decision should follow a ‘good’ process, one that is transparent and repeatable. A good process doesn’t guarantee a good result, so the quality of a decision process must be judged separately from its outcomes. That said, when a process keeps delivering suboptimal results, it needs adjusting.

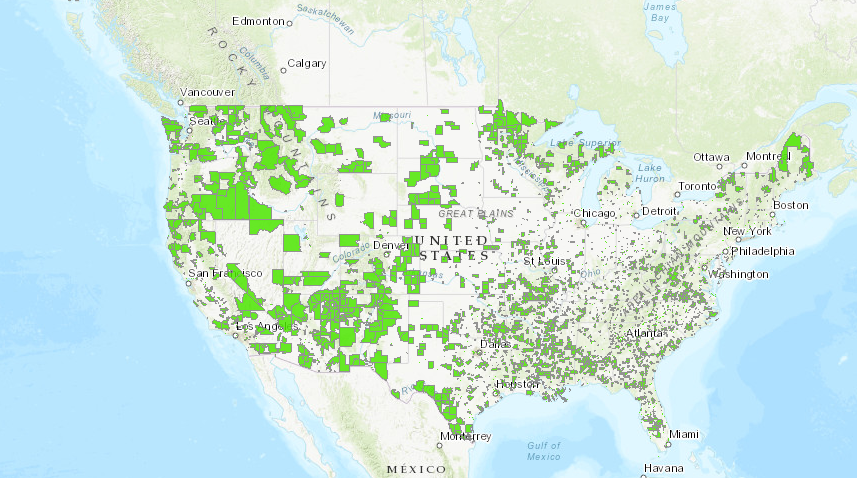

Where does the evidence come from? Many organizations have relied on gathering their own evidence but are now overwhelmed by requirements to back their decision processes with data. Marketplaces for evidence are emerging, as the Social Innovation Research Center’s Patrick Lester explains. There’s both a supply of and a demand for rigorous evidence on the performance of social programs.

Avoid the GPOC (Giant PDF of Crap). Standardization of evidence is already happening, but dissemination of findings (communicating results) is still mostly a free-for-all. Traditional reports, articles, and papers, combined with PowerPoints and other free-form presentations, make it difficult to exchange evidence systematically and quickly.

Practical ways to get there. So how can a nonprofit or publicly financed social program compete?

- Focus on what deciders need. Before launching efforts to gather evidence, examine how decisions are being made. What evidence do they want? Social Impact Bonds, a/k/a Pay for Success Bonds, are a perfect example because they specify desired outcomes and explicit success measures.

- Use insider vocabulary. Recognize and follow the terminology for the categories of evidence deciders want. Be explicit about how data were collected (randomized trial, quasi-experimental design, etc.) and how they were analyzed (statistics, complex modeling, …).

- Live better through OPE. Whenever possible, use Other People’s Evidence. Get research findings from peer organizations, academia, NGOs, and government agencies. Translate their evidence to your program and avoid rolling your own.

- Manage and exchange. Once valuable insights are discovered, be sure to manage and reuse them. Trade/exchange them with other organizations.

- Share systematically. Follow a method for exchanging insights, reflecting key evidence categories. Use a common vocabulary and a common format.
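To make "a common vocabulary and a common format" concrete, here is a minimal sketch of a shareable evidence record. It assumes the three SIF evidence levels as the shared vocabulary; the field names (`program`, `finding`, `design`, `analysis`, `source`) and the JSON format are hypothetical choices for illustration, not a published standard.

```python
import json
from dataclasses import dataclass, asdict
from enum import Enum


class EvidenceLevel(Enum):
    # The three SIF evidence tiers, used here as the shared vocabulary.
    PRELIMINARY = "preliminary"
    MODERATE = "moderate"
    STRONG = "strong"


@dataclass
class EvidenceRecord:
    # Hypothetical fields for one shareable insight; adapt to your funder's terms.
    program: str          # program or intervention the finding is about
    finding: str          # the insight itself, in one or two sentences
    level: EvidenceLevel  # which evidence category the finding meets
    design: str           # how data were collected, e.g. "randomized trial"
    analysis: str         # how data were analyzed, e.g. "statistics"
    source: str           # who produced the evidence (your org or a peer)

    def to_json(self) -> str:
        # Convert to a plain dict and store the level as its string value.
        data = asdict(self)
        data["level"] = self.level.value
        return json.dumps(data, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "EvidenceRecord":
        # Parse JSON and turn the level string back into the enum.
        data = json.loads(text)
        data["level"] = EvidenceLevel(data["level"])
        return cls(**data)
```

Because every organization writes and reads the same fields, a record exported by a peer can be loaded with `EvidenceRecord.from_json` and compared directly with your own findings, instead of being dug out of a free-form report.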

Resources and References

Don’t end the Social Innovation Fund (yet). Angela Rachidi, American Enterprise Institute (@AngelaRachidi).

Why Evidence-Based Policymaking Is Just the Beginning. Susan Urahn, Pew Charitable Trusts.

Alliance for Useful Evidence (UK). How do charities use research evidence? Seeking case studies (@A4UEvidence).

Social Innovation Fund: Early Results Are Promising. Patrick Lester, Social Innovation Research Center, 30-June-2015. “One of its primary missions is to build evidence of what works in three areas: economic opportunity, health, and youth development.” Also, SIF “could nurture a supply/demand evidence marketplace when grantees need to demonstrate success” (page 27).

What Works Cities supports US cities that are using evidence to improve results for their residents (@WhatWorksCities).

Urban Institute Pay for Success Initiative (@UrbanInstitute). “Once strategic planning is complete, jurisdictions should follow a five step process that uses cost-benefit analysis to price the transaction and a randomized control trial to evaluate impact.” Ultimately, evidence will support standardized pricing and defined program models.

Results for America works to drive resources to results-driven solutions that improve the lives of young people and their families (@Results4America).

How to Evaluate Evidence: Evaluation Guidance for Social Innovation Fund.

Evidence Exchange within the US federal network. Some formats are still traditional papers: free-form, big PDFs.

Social Innovation Fund evidence categories: Preliminary, moderate, strong. “This framework is very similar to those used by other federal evidence-based programs such as the Investing in Innovation (i3) program at the Department of Education. Preliminary evidence means the model has evidence based on a reasonable hypothesis and supported by credible research findings. Examples of research that meet the standards include: 1) outcome studies that track participants through a program and measure participants’ responses at the end of the program…. Moderate evidence means… designs of which can support causal conclusions (i.e., studies with high internal validity)… or studies that only support moderate causal conclusions but have broad general applicability…. Strong evidence means… designs of which can support causal conclusions (i.e., studies with high internal validity)” and generalizability (i.e., studies with high external validity).
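The tiering logic quoted above can be read as a simple decision rule, checked from strongest to weakest. The sketch below is a simplification under stated assumptions: it treats each criterion as a yes/no judgment about a single study, reads "broad general applicability" as high external validity, and ignores the additional conditions (number of studies, sample sizes, and so on) that the full SIF guidance applies. The `Study` fields and `evidence_tier` function are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Study:
    # Hypothetical yes/no judgments a reviewer would make about one study.
    credible_findings: bool        # e.g. an outcome study tracking participants
    high_internal_validity: bool   # design supports causal conclusions
    moderate_causal_support: bool  # supports only moderate causal conclusions
    high_external_validity: bool   # findings generalize beyond the study sample


def evidence_tier(study: Study) -> Optional[str]:
    """Map one study onto the SIF tiers, strongest first (simplified sketch)."""
    # Strong: causal conclusions plus generalizability.
    if study.high_internal_validity and study.high_external_validity:
        return "strong"
    # Moderate: causal conclusions, or moderate causal support with broad applicability.
    if study.high_internal_validity or (
        study.moderate_causal_support and study.high_external_validity
    ):
        return "moderate"
    # Preliminary: a reasonable hypothesis backed by credible research findings.
    if study.credible_findings:
        return "preliminary"
    return None  # does not meet any tier
```

Ordering the checks from strongest to weakest means each study lands in the highest tier it qualifies for, which mirrors how the categories nest in the quoted framework.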

Posted by Tracy Allison Altman on 21-Oct-2016.